Simplify and Streamline Data Transfers by Leveraging EFT as Your "Big Data" Gateway to Hadoop

Big data is a collection of data sets so large and complex that it becomes difficult to process using existing database management tools or traditional data processing applications. Data at this scale also brings challenges of capture, curation, storage, analysis, and visualization. As businesses work with an increasing number of partners, the volume of data being exchanged grows into the terabyte or even exabyte range. The Apache Hadoop Big Data Platform was built as a “big data tool” to assist with these large exchanges.

What Is Apache Hadoop?

The Apache Hadoop software library is an open source framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Rather than rely on hardware to deliver high availability, the library itself is designed to detect and handle failures at the application layer. This makes it possible to deliver a highly available service on top of a cluster of computers, each of which may be prone to failure.
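To make the idea of a "simple programming model" concrete, here is a minimal single-process sketch of the map, shuffle, and reduce steps behind a word count, the classic MapReduce example. It is an illustration only: the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical, and a real Hadoop job would run the map and reduce steps in parallel across the cluster rather than in one Python process.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group all values by key, as the framework does between map and reduce."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return dict(grouped)


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Collapse all counts for one word into a single total."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over the input lines."""
    pairs = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(k, v) for k, v in shuffle(pairs).items())


if __name__ == "__main__":
    print(word_count(["big data", "big cluster"]))
```

Because each map call sees only one line and each reduce call sees only one key, the work can be split across many machines without the functions needing to know about each other.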

How Can Globalscape Help?

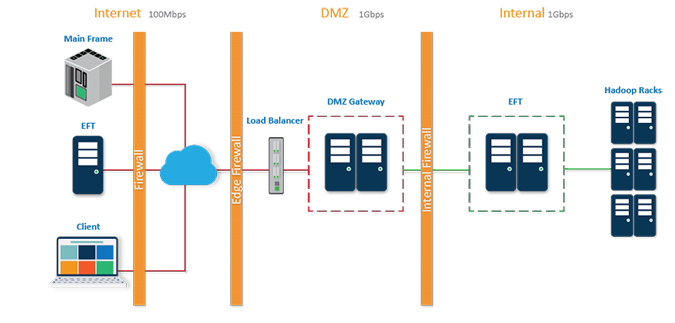

Together, the Globalscape Enhanced File Transfer™ (EFT™) platform and DMZ Gateway® provide a simple, reliable, secure, and streamlined gateway for your data into the Hortonworks Data Platform (HDP), while maintaining security, visibility, and compatibility with open Internet standard protocols.

A single instance of EFT Enterprise in the internal network layer of the data center, together with a single instance of the DMZ Gateway component installed in the DMZ (edge, outward-facing) network layer, can be used to consolidate data generated at multiple locations into one central data center with a Hadoop cluster. The EFT Enterprise instance in the internal network transfers the data into Hadoop and maintains security, tracking, and auditing of every file transferred.
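The article does not say which interface EFT uses to write into Hadoop, so the following is a generic illustration rather than a description of EFT itself. It sketches how any internal host could address a file upload to HDFS through Hadoop's WebHDFS REST API, whose `CREATE` operation is a documented part of Hadoop. The helper name `webhdfs_create_url`, the host name, the port, the user, and the target path are all assumptions made for the example.

```python
from urllib.parse import quote, urlencode


def webhdfs_create_url(namenode_host: str, port: int, hdfs_path: str,
                       user: str, overwrite: bool = False) -> str:
    """Build the WebHDFS URL for the first step of a file upload.

    WebHDFS uploads take two steps: an HTTP PUT to this NameNode URL
    returns a 307 redirect, and the file bytes are then sent with a
    second PUT to the DataNode address given in the Location header.
    """
    query = urlencode({
        "op": "CREATE",
        "user.name": user,                    # simple (non-Kerberos) auth
        "overwrite": str(overwrite).lower(),  # WebHDFS expects true/false
    })
    # quote() keeps the "/" separators and escapes spaces and the like.
    return f"http://{namenode_host}:{port}/webhdfs/v1{quote(hdfs_path)}?{query}"


if __name__ == "__main__":
    # Hypothetical values: adjust host, port, user, and path to your cluster.
    print(webhdfs_create_url("namenode.example.com", 9870,
                             "/landing/partner-a/orders.csv", "eft"))
```

In a layout like the one described above, a request of this kind would originate from the internal network only, so the Hadoop cluster itself is never exposed to the DMZ.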